Posts Tagged “testing”

TeamCity 5.1 + git + fix here + fix there

Finally.

I have a tiny project that needs updating: the idcc.co.il website (*).

Source control: I set up a new git repository on my home machine (using smart-http from git-dot-aspx, a different story for a different post), and now I had to set up a build server.

TeamCity: I downloaded TeamCity 5.1.4 (the free Professional edition). Installation was mostly painless, except that the build agent properties setter got stuck and I had to edit the build agent's configuration file manually. No biggie, just RTFM: http://confluence.jetbrains.net/display/TCD5/Setting+up+and+Running+Additional+Build+Agents#SettingupandRunningAdditionalBuildAgents-InstallingviaZIPFile.

git integration: Git is supported out of the box. I did have a minor glitch: git-dot-aspx is still very immature, and it simply did not work. Luckily, the repository lives on the same machine as the build server and build agent, so I simply pointed the VCS root at its location on the file system.

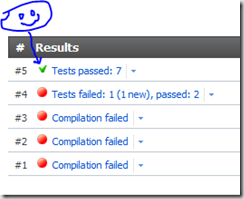

Building: I had a few glitches with MSBuild complaining about missing project types (the web application targets file); those are the first three failures you see in the snapshot. I then copied the targets from somewhere else and got the build running.

Fixing the tests: only to find that I had a broken test. When I created the initial website I never set up a build server, so some changes I introduced later caused a minor regression that nobody noticed. Now that I have a proper build server, it (hopefully) won't happen again.

(*) Yes, Ohad and I are setting up a second idcc conference. It is still in stealth mode, but keep tabs on it: an announcement is planned for early October.

TDD in IKEA

http://altinoren.com/PermaLink,guid,a530ffb1-34d1-49f8-a093-888d6354e91a.aspx

Usually I do not just link to another post, but this one is simply hilarious, and I'd really like a permalink I can easily find again in the future. A post on my own blog is a great way to achieve that…

Can you spot the bug?

Can you spot what will cause the following NUnit test not to run on TeamCity 4.5?

[TestFixture("Testing some cool things")]

public class CoolThingsFixture

{

[Test]

public void When_Do_Expect()

{

Assert.That(2, Is.EqualTo(1+1));

}

}

Hint: TeamCity lists it with the ignored tests, yelling “No suitable constructor was found”.
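The hint points at constructor resolution. As far as I know, since NUnit 2.5 (parameterized fixtures) positional arguments given to [TestFixture] are passed to the fixture's constructor, so the string above is treated as a constructor argument that CoolThingsFixture cannot accept; if it was meant as a description, the named form [TestFixture(Description = "...")] is the likely intent. The toy runner below is not NUnit's code: it only mimics that lookup with reflection, and FixtureArgsAttribute, DescribedFixture and ToyRunner are hypothetical names made up for illustration.

```csharp
using System;
using System.Linq;
using System.Reflection;

// Hypothetical stand-in for a fixture attribute whose positional arguments
// are forwarded to the fixture's constructor (NOT NUnit's TestFixtureAttribute).
[AttributeUsage(AttributeTargets.Class)]
public sealed class FixtureArgsAttribute : Attribute
{
    public object[] Arguments { get; }
    public FixtureArgsAttribute(params object[] arguments) => Arguments = arguments;
}

// Same shape as the quiz: one string argument, but only the implicit parameterless constructor.
[FixtureArgs("Testing some cool things")]
public class CoolThingsFixture { }

// Declaring a constructor that accepts the argument makes the lookup succeed.
[FixtureArgs("Testing some cool things")]
public class DescribedFixture
{
    public string Name { get; }
    public DescribedFixture(string name) => Name = name;
}

public static class ToyRunner
{
    // Returns a fixture instance, or null when no public constructor matches the
    // attribute's argument types (a real runner would report the fixture as not runnable).
    public static object TryCreate(Type fixtureType)
    {
        object[] args = fixtureType.GetCustomAttribute<FixtureArgsAttribute>()?.Arguments
                        ?? Array.Empty<object>();
        Type[] argTypes = args.Select(a => a.GetType()).ToArray();
        ConstructorInfo ctor = fixtureType.GetConstructor(argTypes);
        return ctor?.Invoke(args);
    }
}
```

ToyRunner.TryCreate(typeof(CoolThingsFixture)) returns null because no public constructor takes a single string, which is the toy equivalent of “No suitable constructor was found”.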

AutoStubber to ease stub based unit tests

Tired of setting up stubs for your class under test?

Tired of compile errors when you add one more dependency to a class?

The AutoStubber to the rescue.

Given

interface IServiceA

{

string GetThis(long param);

}

interface IServiceB

{

void DoThat(string s);

}

class MyService

{

public MyService(IServiceA a, IServiceB b) { ... }

...

}

...

you can write:

var service = new AutoStubber<MyService>().Create();

// Arrange

var theString = "whatever";

service.Stubs().Get<IServiceA>().Stub(x => x.GetThis(0)).IgnoreArguments().Return(theString);

// Act

service.Execute();

// Assert

service.Stubs().Get<IServiceB>().AssertWasCalled(x => x.DoThat(theString));

The code for AutoStubber:

Mind you, it's not the prettiest code, but it gets the job done.

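using System;

using System.Collections.Generic;

using System.Linq;

using System.Reflection;

using Rhino.Mocks;

public class AutoStubber<T> where T : class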

{

static readonly Type TypeofT;

static readonly ConstructorInfo Constructor;

static readonly Type[] ParameterTypes;

static readonly Dictionary<object, AutoStubber<T>> Instances = new Dictionary<object, AutoStubber<T>>();

static AutoStubber()

{

TypeofT = typeof(T);

Constructor = TypeofT.GetConstructors().OrderByDescending(ci => ci.GetParameters().Length).First();

ParameterTypes = Constructor.GetParameters().Select(pi => pi.ParameterType).ToArray();

}

public static AutoStubber<T> GetStubberFor(T obj)

{

return Instances[obj];

}

bool _created;

public T Create()

{

if (_created)

throw new InvalidOperationException("Create can only be called once per AutoStubber");

_created = true;

return Instance;

}

readonly Dictionary<Type, object> _dependencies = new Dictionary<Type, object>();

private T Instance { get; set; }

public AutoStubber()

{

var parameters = new List<object>(ParameterTypes.Length);

foreach (var parameterType in ParameterTypes)

{

var parameter = MockRepository.GenerateStub(parameterType);

parameters.Add(parameter);

_dependencies[parameterType] = parameter;

}

Instance = (T)Constructor.Invoke(parameters.ToArray());

Instances[Instance] = this;

}

public TDependency Get<TDependency>()

{

return (TDependency)_dependencies[typeof(TDependency)];

}

}

public static class AutoStubberExtensions

{

public static AutoStubber<T> Stubs<T>(this T obj)

where T : class

{

return AutoStubber<T>.GetStubberFor(obj);

}

}

I know there is the AutoMockingContainer and various other tools out there, but this approach felt very natural to me: it has a very simple API (there is no need to keep a reference to a container), and it took me less than an hour to knock off.

One enhancement I am considering is allowing pre-created values to be supplied for some of the constructor parameters, but so far I haven't needed it.
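A minimal sketch of that enhancement, assuming a hypothetical OverridableAutoStubber<T> that keeps AutoStubber's "greediest public constructor" rule but lets the caller register pre-created values with a hypothetical Use<TDependency>() method before Create(). To stay dependency-free it takes a stub factory delegate (a stand-in for MockRepository.GenerateStub) and uses hand-written fakes; the demo types mirror the post's example, and the Execute body is invented for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Reflection;

// Demo types mirroring the post's example; Execute's body is a hypothetical illustration.
public interface IServiceA { string GetThis(long param); }
public interface IServiceB { void DoThat(string s); }

public class MyService
{
    readonly IServiceA _a;
    readonly IServiceB _b;
    public MyService(IServiceA a, IServiceB b) { _a = a; _b = b; }
    public void Execute() => _b.DoThat(_a.GetThis(0));
}

// Hand-written fakes used instead of Rhino Mocks stubs so the sketch has no dependencies.
public class FixedServiceA : IServiceA { public string GetThis(long param) => "fixed"; }
public class RecordingServiceB : IServiceB
{
    public readonly List<string> Calls = new List<string>();
    public void DoThat(string s) => Calls.Add(s);
}

// Variation on AutoStubber<T>: each constructor parameter gets either a pre-registered
// value (via Use) or whatever the caller-supplied stub factory returns for that type.
public class OverridableAutoStubber<T> where T : class
{
    // Same rule as AutoStubber<T>: the public constructor with the most parameters.
    static readonly ConstructorInfo Constructor = typeof(T).GetConstructors()
        .OrderByDescending(ci => ci.GetParameters().Length).First();

    readonly Dictionary<Type, object> _dependencies = new Dictionary<Type, object>();
    readonly Func<Type, object> _stubFactory;
    T _instance;

    public OverridableAutoStubber(Func<Type, object> stubFactory) => _stubFactory = stubFactory;

    // Registers a pre-created value for one parameter type; only valid before Create().
    public OverridableAutoStubber<T> Use<TDependency>(TDependency value)
    {
        if (_instance != null) throw new InvalidOperationException("Use must be called before Create");
        _dependencies[typeof(TDependency)] = value;
        return this;
    }

    public T Create()
    {
        if (_instance != null) throw new InvalidOperationException("Create can only be called once");
        object[] args = Constructor.GetParameters().Select(p =>
        {
            if (!_dependencies.TryGetValue(p.ParameterType, out object dependency))
            {
                dependency = _stubFactory(p.ParameterType);   // no override: fall back to a stub
                _dependencies[p.ParameterType] = dependency;
            }
            return dependency;
        }).ToArray();
        _instance = (T)Constructor.Invoke(args);
        return _instance;
    }

    public TDependency Get<TDependency>() => (TDependency)_dependencies[typeof(TDependency)];
}
```

One design consequence worth noting: the type argument of Use has to be spelled out, as in Use<IServiceA>(new FixedServiceA()); otherwise it is inferred as the concrete FixedServiceA and never matches the constructor parameter type.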

A very nice Unit Testing 101 presentation

At Yaron Nave’s blog, which is excellent reading for all things WCF.

Grab it at: http://webservices20.blogspot.com/2009/06/unit-tests-presentation.html

Unit Testing in PROLOG - take 2

I’ve refined my “unit testing framework” a bit, to make it less awkward.

the code:

test(Fact/Test) :- current_predicate_under_test(Predicate), retractall(test_def(Predicate/Fact/Test)), assert(test_def(Predicate/Fact/Test)).

setup_tests(Predicate) :- retractall(test_def(Predicate/_/_)), assert(current_predicate_under_test(Predicate)).

end_setup_tests :- retractall(current_predicate_under_test(_)).

run_tests :- dynamic(tests_stats/2), bagof(P/Tests, bagof((Fact/Test), test_def(P/Fact/Test), Tests), TestsPerPredicate), run_tests(TestsPerPredicate, Passed/Failed), write_tests_summary(Passed/Failed).

run_tests(TestsPerPredicate, TotalPassed/TotalFailed) :- run_tests(TestsPerPredicate, 0/0, TotalPassed/TotalFailed).

run_tests([], Passed/Failed, Passed/Failed) :- !.

run_tests([P/Tests|Rest], Passed/Failed, TotalPassed/TotalFailed) :- write('testing '), write(P), foreach_test(Tests, PassedInPredicate/FailedInPredicate), write(' passed:'), write(PassedInPredicate), (FailedInPredicate > 0, write(' failed:'), write(FailedInPredicate) ; true), nl, Passed1 is Passed + PassedInPredicate, Failed1 is Failed + FailedInPredicate, run_tests(Rest, Passed1/Failed1, TotalPassed/TotalFailed).

foreach_test(Tests, Passed/Failed) :- foreach_test(Tests, 0/0, Passed/Failed).

foreach_test([], Passed/Failed, Passed/Failed) :- !.

foreach_test([Fact/Test|Rest], Passed/Failed, NewPassed/NewFailed) :- assert((run_test :- Test)), ( run_test, !, NextPassed is Passed + 1, NextFailed is Failed ; NextFailed is Failed + 1, NextPassed is Passed, write('FAIL: '), write(Fact), nl ), retract((run_test :- Test)), foreach_test(Rest, NextPassed/NextFailed, NewPassed/NewFailed).

write_tests_summary(Passed/0) :- !, nl, write(Passed), write(' tests passed :)'), nl.

write_tests_summary(Passed/Failed) :- nl, write(Passed), write(' tests passed, however'), nl, write(Failed), write(' tests failed :('), nl.

reset_all_tests :- retractall(test_def(_/_/_)).

the usage:

:- setup_tests('conc/3').

:- test('empty and empty returns empty'/( conc([], [], []))).

:- test('empty and nonempty returns L2'/( conc([], [1,2], [1,2]))).

:- test('nonempty and empty returns L1'/( conc([1,2], [], [1,2]))).

:- test('nonempty and nonempty returns L1 concatenated with L2'/( conc([1,2], [3,4], [1,2,3,4]))).

:- end_setup_tests.

my current test output:

| ?- run_tests.

testing conc/3 passed:4

testing create_list/3 passed:2

testing empty_pit/5 passed:1

testing get_opposite_pit/2 passed:2

testing in_range/2 passed:2

testing is_in_range/2 passed:4

testing put_seeds/5 passed:3

18 tests passed :)

yes

Unit testing in PROLOG

Finally, I'm sitting down to finish my Computer Science degree. I've been studying at the Israeli Open University since 2003, while working full time and more. More than two years ago I reached the point of having literally no time at all to finish it up, so I left it with only two final projects still to complete, present and defend.

The first one is to write a simple AI-enabled game in PROLOG, using a variation of the depth-limited alpha-beta algorithm.

Back when I took that course, the whole paradigm was too strange to me. I'd been doing procedural and OO coding for years, and the programs just looked… wrong.

Nowadays, having developed a lot of curiosity about declarative languages like Erlang and F# (and being a much better and way more experienced developer), I can relate to that type of coding more easily.

So, dusting off the rust of two years of not touching it at all, I sat down today to start working on that project (delivery is next month). I started by writing a small helper for running unit tests on my code.

Ain’t pretty, but it serves both the need to test my code, and the need to re-learn the language:

run_tests :-

dynamic([ tests_passed/1, failing_tests/1, total_tests_passed/1, total_failing_tests/1 ]),

assert(tests_passed(0)),

assert(failing_tests([])),

assert(total_tests_passed(0)),

assert(total_failing_tests([])),

bagof( (Module/Predicate, Tests),

tests(Module/Predicate, Tests),

TestDefinitions),

run_tests_definitions(TestDefinitions),

retract(total_tests_passed(TotalPassedAtEnd)),

retract(total_failing_tests(TotalFailedAtEnd)),

len(TotalFailedAtEnd, TotalFailedAtEndCount),

write('summary:'),

nl,

write('Passed: '),

write(TotalPassedAtEnd),

write(' Failed: '),

write(TotalFailedAtEndCount),

nl,

nl,

(TotalFailedAtEndCount> 0, write_fails(TotalFailedAtEnd) ; write('Alles Gut'), nl).

run_tests_definitions([]) :-

!.

run_tests_definitions([(Module/Predicate, Tests)|T]) :-

write('module: '),

write(Module),

write(' predicate: '),

write(Predicate),

write(' ... '),

run_tests(Tests),

retract(tests_passed(Passed)),

retract(failing_tests(Failed)),

assert(tests_passed(0)),

assert(failing_tests([])),

len(Failed, FailedCount),

write('Passed: '),

write(Passed),

write(' Failed: '),

write(FailedCount),

nl,

retract(total_tests_passed(TotalPassed)),

retract(total_failing_tests(TotalFailed)),

NewTotalPassed is TotalPassed + Passed,

conc(Failed, TotalFailed, NewTotalFailed),

assert(total_tests_passed(NewTotalPassed)),

assert(total_failing_tests(NewTotalFailed)),

run_tests_definitions(T).

write_fails([]) :-

!.

write_fails([H|T]) :-

write_fails(T),

write(H),

write(' failed'),

nl.

run_tests([]) :-

!.

run_tests([H|T]) :-

run_test(H),

run_tests(T).

run_test(Test) :-

call(Test),

!,

tests_passed(X),

retract(tests_passed(X)),

NewX is X + 1, assert(tests_passed(NewX)).

run_test(Test) :-

failing_tests(X),

retract(failing_tests(X)),

NewX = [Test|X],

assert(failing_tests(NewX)).

% Asserts

assert_all_members_equal_to([], _).

assert_all_members_equal_to([H|T], H) :-

assert_all_members_equal_to(T, H).

This code allows me to define my tests like the following:

tests(moves/change_list, [

change_list__add_first__works,

change_list__add_middle__works,

change_list__add_last__works,

change_list__empty_first__works,

change_list__add_middle__works,

change_list__add_last__works]).

change_list__add_first__works :-

L = [1,1,1],

change_list(L, L1, 1, add),

L1 = [2,1,1].

change_list__add_middle__works :-

L = [1,1,1],

change_list(L, L1, 2, add),

L1 = [1,2,1].

... Invoking the tests is as simple as calling the predicate:

:- run_tests.

And the current output from my project is:

module: utils predicate: in_range ... Passed: 4 Failed: 0

module: utils predicate: create_list ... Passed: 2 Failed: 0

module: moves predicate: change_list ... Passed: 6 Failed: 0

module: moves predicate: move ... Passed: 1 Failed: 0

module: moves predicate: step ... Passed: 1 Failed: 1

summary:

Passed: 14 Failed: 1

step__when_ends_within_same_player_pits__works failed

yes

Ahm. A failing test… back to work, I guess.

BTW, the game I am implementing is Kalah.

How would you test that?

Given the following code:

public void UpdatePerson(int id, string name)

{

Person p = peopleRepository.Get(id);

p.Name = name;

peopleRepository.Update(p);

}

One answer would be (using a pseudo mocking framework):

Person p = new Person();

Expect.Call(peopleRepository.Get(0)) .Returns(p);

Expect.Call(peopleRepository.Update(p));

...

service.UpdatePerson(0, "MyName");

Another approach would be (using pseudo-code again):

Person p = CreateAndInsertToDB();

service.UpdatePerson(p.Id, "New Name");

FlushAndRecreateTheSession();

Person updated = GetFromDB(p.Id);

Assert.Equal("New Name", updated.Name);
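Both approaches can be tried without a mocking framework or a database. In this sketch, Person, IPeopleRepository, PersonService, SpyRepository and InMemoryRepository are hypothetical names: the spy plays the role of the pseudo Expect.Call expectations (interaction-based), while the in-memory repository copies on every read and write, loosely standing in for "flush and reload from the DB" (state-based).

```csharp
using System;
using System.Collections.Generic;

public class Person
{
    public int Id { get; set; }
    public string Name { get; set; }
}

public interface IPeopleRepository
{
    Person Get(int id);
    void Update(Person p);
}

// Hypothetical host for the UpdatePerson method from the question.
public class PersonService
{
    readonly IPeopleRepository peopleRepository;
    public PersonService(IPeopleRepository repository) => peopleRepository = repository;

    public void UpdatePerson(int id, string name)
    {
        Person p = peopleRepository.Get(id);
        p.Name = name;
        peopleRepository.Update(p);
    }
}

// Interaction style: returns a canned Person and records what was passed to Update.
public class SpyRepository : IPeopleRepository
{
    public Person ToReturn { get; set; }
    public readonly List<Person> Updated = new List<Person>();
    public Person Get(int id) => ToReturn;
    public void Update(Person p) => Updated.Add(p);
}

// State style: stores copies, so a change is only visible after Update persists it.
public class InMemoryRepository : IPeopleRepository
{
    readonly Dictionary<int, Person> _rows = new Dictionary<int, Person>();
    public void Insert(Person p) => _rows[p.Id] = Copy(p);
    public Person Get(int id) => Copy(_rows[id]);
    public void Update(Person p) => _rows[p.Id] = Copy(p);
    static Person Copy(Person p) => new Person { Id = p.Id, Name = p.Name };
}
```

The spy-based test pins down how UpdatePerson talks to the repository; the in-memory test only checks the stored result, so it survives refactorings of the call sequence but says nothing about, say, how many times Update was called.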

What would you do, and why?

(I'm also tagging this under altnetuk, as it was inspired by a session on test granularity, mocking frameworks, etc.)

Unit Testing 101 Article

Usually I won't just link to someone else's post, but this one is a very good introductory Unit Testing 101 article, covering the basics of the idea as well as the basics of NUnit and Rhino.Mocks.

A must read for Unit Testing newbies.